Have you ever had to sort through HuggingFace to find your best model? There are over 54,000 models on HuggingFace, so it’s not an easy task.

Most people just choose the most popular model, and this is usually BERT or some BERT variant. BERT was created by Google, so it must be good.

But is BERT really the best choice for you?

How can you find out? You can search through the literature, read blogs, ask on Reddit, etc., and try to find a better model. This is time-consuming and imperfect. Fortunately, there is a better way.

The weightwatcher tool can tell you.

WeightWatcher is an open-source, data-free diagnostic tool that can estimate the quality of a DNN model like BERT, GPT, etc., without needing any data (no training or test data, just the weights). It has been featured in JMLR, at ICML and KDD, and even in Nature.

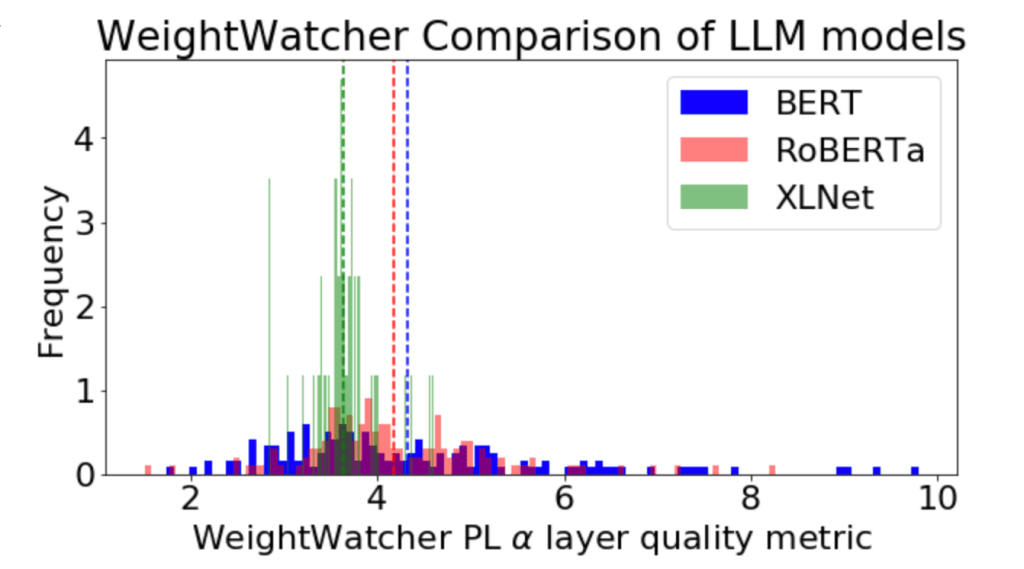

Here’s an example using weightwatcher to compare 3 NLP models: BERT, RoBERTa, and XLNet.

The WeightWatcher Power-Law (PL) metric alpha is a DNN model quality metric; smaller is better. The plot above displays all the layer alpha values for the 3 models. It is immediately clear that the XLNet layers look much better than BERT or RoBERTa; the alpha values are smaller on average, and there are no alphas larger than 5. In contrast, the BERT and RoBERTa alphas are much larger on average, and both models have too many large alphas.

This is totally consistent with the published results: in the original paper (from Carnegie Mellon University and Google Brain), XLNet outperforms BERT on 20 different NLP tasks.

Do it yourself:

WeightWatcher will work with any HuggingFace Transformer (or CV) model.
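If you would rather run the comparison locally, here is a minimal sketch of how it might look. It assumes the `weightwatcher` and `transformers` packages are installed, and it uses `WeightWatcher(model=...)` and `analyze()`, which returns a table with one row per analyzed layer and an `alpha` column. The helper names `summarize_alphas`, `layer_alphas`, and `compare_models` are made up for this illustration, and the threshold of 5 is simply the cutoff mentioned above. This is not necessarily how the plot in this post was produced.

```python
from statistics import mean


def summarize_alphas(alphas, threshold=5.0):
    """Return the layer count, mean alpha, and number of layers above `threshold`."""
    alphas = [float(a) for a in alphas]
    return {
        "num_layers": len(alphas),
        "mean_alpha": mean(alphas),
        "num_above_threshold": sum(a > threshold for a in alphas),
    }


def layer_alphas(model_name):
    """Load a HuggingFace model by name and return its per-layer alpha values."""
    # Imported lazily so summarize_alphas works without these packages installed.
    import weightwatcher as ww
    from transformers import AutoModel

    model = AutoModel.from_pretrained(model_name)
    watcher = ww.WeightWatcher(model=model)
    details = watcher.analyze()  # pandas DataFrame, one row per analyzed layer
    return details["alpha"].dropna().tolist()


def compare_models(model_names):
    """Map each model name to a summary of its layer alphas."""
    return {name: summarize_alphas(layer_alphas(name)) for name in model_names}
```

For example, `compare_models(["bert-base-uncased", "roberta-base", "xlnet-base-cased"])` downloads the three checkpoints and returns one summary per model. Those checkpoint names are my choice for illustration; the exact checkpoints behind the plot above are not stated here.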

Here is a Google Colab notebook that lets you reproduce this yourself.

Give it a try. And if you need help with AI, ML, or just Data Science, please reach out. I provide strategy consulting, data science leadership, and hands-on, heads-down development. I will have availability in Q3 2022 for new projects. Reach out today. #talkToChuck #theAIguy