WeightWatcher is an open-source model monitoring tool that provides data-free diagnostics for production-quality Deep Neural Networks (DNNs). It can tell you if your model is over-trained or over-parameterized, and it can tell you which layers are over-trained or under-trained (over-parameterized), all without needing training or test data.

Let’s see how to do this. First, install the tool.

```
pip install weightwatcher
```

Second, pick a model and get a basic description of it:

```python
import weightwatcher as ww

# my_model is a placeholder for your own pre-trained model
watcher = ww.WeightWatcher(model=my_model)
details = watcher.analyze()
```

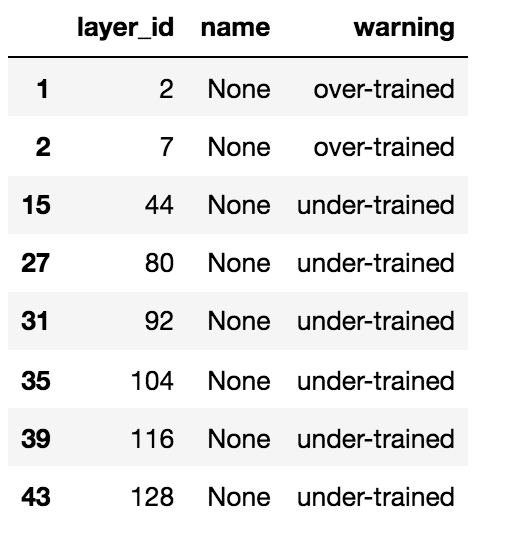

WeightWatcher produces a pandas dataframe, details, with layer metrics describing your model. In particular, the details dataframe contains layer names, ids, types, and warnings.

Here is an example: an analysis of the OpenAI GPT model (discussed in our recent Nature paper).

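```python
import transformers
from transformers import OpenAIGPTModel, GPT2Model

# Load the pre-trained OpenAI GPT weights and switch to inference mode
gpt_model = OpenAIGPTModel.from_pretrained('openai-gpt')
gpt_model.eval();

# Analyze the layer weight matrices; no training or test data is needed
watcher = ww.WeightWatcher(model=gpt_model)
details = watcher.analyze()
```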

The details dataframe now includes a wide range of information for each layer, including a dedicated warning column:

```python
details[details.warning!=""][['layer_id','name','warning']]
```
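To make this filter concrete without downloading a model, here is a minimal sketch that runs it on a hand-built stand-in for `details`. Only the column names `layer_id`, `name`, and `warning` come from the example above. The rows, layer names, and warning strings are invented for illustration and are not real WeightWatcher output.

```python
import pandas as pd

# Toy stand-in for the dataframe returned by watcher.analyze().
# Every value below is made up for illustration.
details = pd.DataFrame(
    {
        "layer_id": [0, 1, 2, 3],
        "name": ["embed", "attn.0", "mlp.0", "mlp.1"],
        "warning": ["", "over-trained", "", "under-trained"],
    }
)

# Keep only the layers that carry a warning (the same filter as above).
flagged = details[details.warning != ""][["layer_id", "name", "warning"]]

# Count how many layers fall under each warning type.
warning_counts = flagged["warning"].value_counts().to_dict()

print(flagged)
print(warning_counts)
```

On a real model, the same two lines of filtering and counting can be applied directly to the `details` dataframe produced by `watcher.analyze()`.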

For comparison, below we show the same details dataframe, but for GPT2. GPT2 is the same model as GPT, but trained with more and better data. Being a much better model, GPT2 has far fewer warnings than GPT.
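One way to put such a comparison into numbers is to tabulate warning counts per model. The sketch below assumes each model's `details` dataframe has a `warning` column, as in the examples above. The helper `warning_summary` and the two toy dataframes (`model_a`, `model_b`) are hypothetical and are not part of WeightWatcher; the values are placeholders, not measured results for GPT or GPT2.

```python
import pandas as pd


def warning_summary(details_by_model):
    """Build a table of warning counts: one row per model, one column per warning type."""
    counts = {}
    for model_name, details in details_by_model.items():
        flagged = details[details["warning"] != ""]
        counts[model_name] = flagged["warning"].value_counts()
    # Models become rows; warning types a model lacks are filled with 0.
    return pd.DataFrame(counts).T.fillna(0).astype(int)


# Invented stand-ins for two details dataframes (not real analysis output).
details_a = pd.DataFrame(
    {"layer_id": [0, 1, 2, 3],
     "warning": ["over-trained", "over-trained", "under-trained", ""]}
)
details_b = pd.DataFrame(
    {"layer_id": [0, 1, 2, 3],
     "warning": ["", "", "over-trained", ""]}
)

summary = warning_summary({"model_a": details_a, "model_b": details_b})
totals = summary.sum(axis=1).to_dict()

print(summary)
print(totals)
```

With real models, you would pass in the dataframes returned by `watcher.analyze()` for each one, for example keyed as `"gpt"` and `"gpt2"`.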

That’s all there is to it. WeightWatcher provides simple layer metrics for pre-trained Deep Neural Networks, with warnings that flag which layers are over-trained and which are under-trained.

Give it a try. We are looking for early adopters needing better, faster, and cheaper AI monitoring. If it is useful to you, please let me know.

Hi Charles, noob question but does it also work for non deep learning models? Or can we tweak it to work for other models? Thanks

No, this is specifically for large scale, production quality Deep Learning models, such as the latest transformer models.

Thanks for replying. I read the paper, very enlightening. Do you know something similar for non DL models? Thanks

No, I do not. This is a completely new theory, with no foundation in the traditional machine learning literature. For non-DL models, it is something I will need to work on.

Thanks. Do you think this theory can be applied to normal tree based models? I will definitely read the paper as well but wanted to know your thoughts
